Increasing Student Engagement and Assessing the Value of an Online Collaboration Tool: The Case of VoiceThread

Solomon Negash, Kennesaw State University

Tamara Powell, Kennesaw State University

Introduction

Engaging students in an online course is a challenge. Students often report that online courses are “death by discussion board,” which leads to the conclusion that at the lower levels, at least, university students do not find discussion boards all that engaging. Students indicate it is hard to have discussions on topics they are just now learning, and they really don’t care to read and respond to their peers’ uninformed opinions regarding discussion topics. Given the enormous amount of time it takes to respond to, supervise, and grade discussion boards, one wonders why instructors are working so hard on something that doesn’t seem to be very effective at engaging students in learning.

The lack of student engagement in online discussion board assignments has a multiplicity of causes. Any meaningful question with regard to an assignment usually has a handful of correct answers. For example, in an introductory American literature course, students might be asked to explicate a story with regard to a particular theme. In a real-time, face-to-face discussion, at least half the class could actively engage in dialogue as the instructor facilitated. But on an asynchronous discussion board, on which every student needs an equal opportunity to participate for an individual grade, such a question means that a handful of students get prime territory to answer, and the rest must either scrape up an answer out of what they can find that is left in the story to discuss, or somehow graft their responses onto another student’s while still trying to get points for being original. The authors find that this condition fails to provide an equal playing field for every student where a graded assignment is concerned. As a result, the authors have experimented with open-ended discussion questions on discussion boards, such as “If you had to pick the most important author in American literature, who would you choose and why?” To the authors’ chagrin but not their surprise, student evaluations complained about these types of questions as “busy work.”

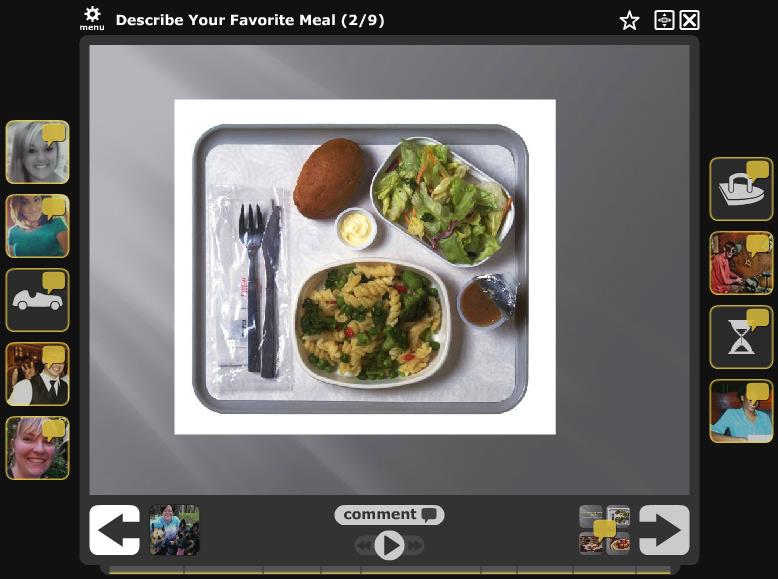

After further experimentation and student feedback regarding the online discussion board in a literature course, the VoiceThread tool was utilized to assist in leveling the playing field for online student discussion. The authors first heard of VoiceThread from former students who became teachers and used the tool themselves. The tool was recommended as one that could provide a platform for meaningful interaction with more robust features than a discussion board. On a VoiceThread, for example, students can type comments as on a discussion board. However, unlike most discussion boards, they can also create audio and video comments and doodle with some basic tools. VoiceThread is also different from a discussion board because it places students’ comments around an image. Students can see a visual representation of themselves and their classmates discussing a topic (see Figure 1).

In the first attempt in this study to use VoiceThread successfully as an online discussion tool in an American literature classroom, a giant PowerPoint was created with every reading assignment getting its own slide. The PowerPoint was then loaded onto the VoiceThread platform. Students were provided with the following instruction:

Throughout the semester, please comment extensively (five well-developed sentences each) regarding your insights and intellectual thoughts on at least five readings. Your contributions must be WHOLLY your own. If you know something, share it, but do not cruise the internet to craft a contribution. Finally, respond in two sentences or more to at least ten of your colleagues’ comments.

At the end of the semester, it might seem like the result was a great success judging by the number of responses, student ideas generated, student engagement, and depth of participation. The sad truth is that most of these postings were added the last day of class. And evaluations reflected that students felt this assignment was meaningless. A meaningful, intellectual discussion among 40 people on five or more topics doesn’t happen in 24 hours of frantic, last-minute posting. One might ask, “Why bother?” From the student evaluations, it seems that students don’t care whether they are engaged in their online course. However, the researchers care, and from this concern flows the current effort to understand how to increase student engagement and to evaluate whether students find value in online collaboration tools.

The big difference between face-to-face and online discussion is that face-to-face discussion is ephemeral and, usually, ungraded. It generally starts with a broad topic and moves quickly. Also, not everyone has to participate for a discussion to be deemed successful. Online, students cannot hide. Each student needs an equal opportunity to participate and earn points. In an online course with 40 students, how does that happen? And how does an instructor make it engaging? In a face-to-face class the instructor may ask a variety of questions and may even have each student get a question and provide an answer as a sort of concept “check-up.” That activity can be replicated in an online course. But simply posting 40 questions for 40 students to answer isn’t necessarily student-student interaction. Students will likely answer a question and move on to other items in their hectic lives. In addition, it is often challenging to produce discussion responses that are real or meaningful. In fact, the authors find that more often than not, students veer off into hazy, uncharted, and frequently off-topic territory that tends to cost rather than earn points. The authors use VoiceThread as the online tool for this research and conduct a qualitative and quantitative study to address the research questions.

In the subsequent sections we present a literature review, methodology, two discussion sections on qualitative and quantitative research methods, and a conclusion. Our qualitative and quantitative research on the use of VoiceThread in the college-level, online classroom helps to bridge the literature gap in the evaluation of web 2.0 tools for college-level online teaching.

Literature Review

VoiceThread is very popular in elementary and high school classrooms. Research has been reported on the use of VoiceThread in various disciplines including math, reading, history, and nursing. Brunvand and Byrd praise VoiceThread for engaging students and holding the attention of students who are easily distracted or who have other learning challenges.[i] Bomar notes the advantages in engagement that VoiceThread has over other presentation tools.[ii] According to Bomar, VoiceThread gives students multiple ways to use media in crafting their literature presentations and offers students a sense of audience that they don’t have in other presentation formats. Further, Bomar posits that with VoiceThread, students’ success in completing the assignment increases. Siegle praises the versatility of the tool: “The beauty of VoiceThread is the variety of formats comments can take. Some students may prefer to type their comments; others might be more comfortable recording video responses.”[iii]

For the most part, the research on VoiceThread comes from elementary and high school applications. However, Harris finds that in the nursing program at Bronx Community College, kinesthetic learners are drawn to VoiceThread because the technology “can be connected to reality and allow for application of theory. The experience is what stimulates the learning for the kinesthetic learner.”[iv] Harris also uses the tool to perform group diagnostic exercises. Ng finds that VoiceThread is one of several tools that can help to “teach [university students who are] digital natives” how to evaluate and apply digital tools meaningfully.[v] Bran investigates VoiceThread for collaborating with universities on other continents and finds it to be a successful tool that contributes to more meaningful learning experiences in the college language classroom.[vi] As can be seen, there is much anecdotal evidence on the successful use of VoiceThread, but there is very little evidence-based research. Hew and Cheung point out that “actual evidence regarding the impact of Web 2.0 technologies on students learning is as yet fairly weak,” and actual evidence from university teaching is even harder to find.[vii] The current research contributes to our understanding and bridges the literature gap by providing qualitative and quantitative support for the use of VoiceThread in the college-level, online classroom.

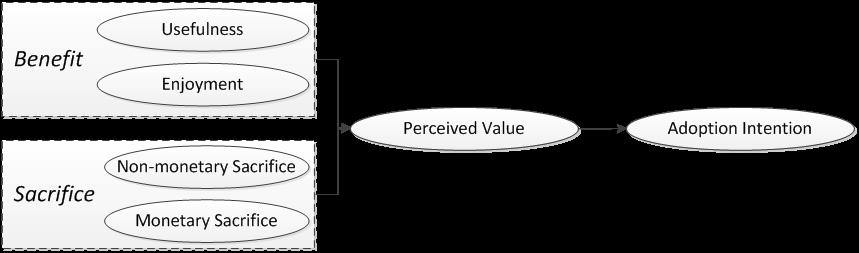

Kim, Chan, and Gupta developed a theoretical model for assessing the value of a technology,[viii] depicted in Figure 2. This model uses the benefits and sacrifices of users to understand a user’s perception of the technology and his or her adoption intention. Compared to the traditional Technology Acceptance Model,[ix] the value-based adoption model shows significant improvement in determining intention.

In the current study the researchers adopt the value-based adoption model of technology. The researchers use the validated instruments from the Kim, Chan, and Gupta model and make only contextual changes to assess the student value of the online collaboration tool, VoiceThread. The study setup and results are discussed in the Quantitative Analysis and Discussion section below.

Methodology

The authors applied both qualitative and quantitative methods. The study was done at a large university with over twenty-five thousand students in the southeastern region of the United States of America. After initial, introductory research in an American literature course, the current research was performed with two courses: an English course and an Information Systems course. The English course is an introductory technical writing class; the authors used structured questions, focus groups, and student feedback to understand student engagement and how students use the collaborative tool. The Information Systems course is an introductory programming logic course; the authors used survey questions after the students used VoiceThread in their classroom to understand if students found the collaborative tool valuable. The authors discuss the results of the two approaches (qualitative and quantitative) in the following two sections.

Qualitative Analysis and Discussion

In the technical writing class students are given a series of assignments called the “Correct or Scooped” game. From student feedback the authors knew that students often view online courses as walls of text that they don’t always take time to read carefully. To help facilitate student success, the game is kicked off with very clear directions. (A sample scene from the directions appears in Figure 3.) In addition, to make the directions stand out, the instructor uses Make Beliefs Comix (http://www.makebeliefscomix.com/) to create directions in a comic strip so that the content will be more enticing for students to read carefully.

The “Correct or Scooped” game has seven rounds throughout the semester. There is one round for each of seven key lessons that we cover in an introductory technical writing course: logical argument, definition and descriptions, audience analysis, paragraph organization, ethical dilemmas, correct writing, and effective graphs and graphics. For each element, a VoiceThread is created with enough slides so that each student can pick an example and show what he or she knows. That means that if there are 35 students in a class, then 40 “game pieces” or slides are created for each game. The assignments are delivered during appropriate and limited time frames throughout the semester and graded immediately afterward. Students are not allowed to wait until the last week of class to complete the assignments.

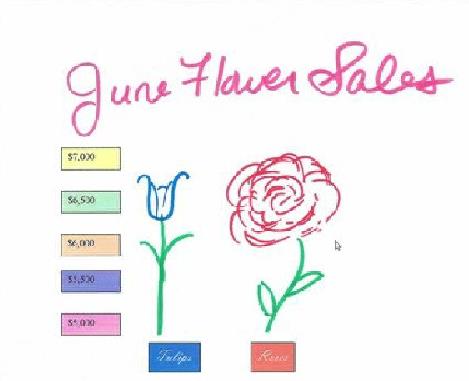

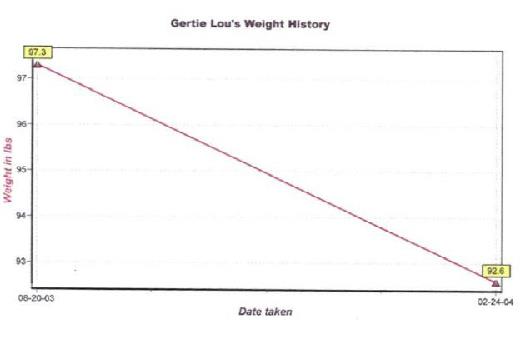

At different times during the semester, students are given a weeklong period to play the game. For example, when correct use of graphs and graphics is discussed, students will be assigned the Graphs and Graphics Correct or Scooped game. At any point during the week of play, a student may enter the Graphs and Graphics Correct or Scooped VoiceThread and pick one of the available game pieces: that is, slides on the VoiceThread not already used by another student. During the graphs and graphics round, for example, a class of 35 students receives 40 slides with different problematic graphics. Two examples of slides/game pieces can be seen in Figure 4.

Figure 4. Two slides from the Graphs and Graphics Correct or Scooped Game:

June Flower Sales (above) and Gertie Lou’s Weight History (below).

As illustrated in Figure 3, the “Scooped” part deals with assessment. Suppose JoAnn sees that no one has yet “played” the June Flower Sales slide. JoAnn then chooses to address the problems in the June Flower Sales slide. If JoAnn provides the correct answer, she receives 10 points. However, if JoAnn provides a wrong answer on her slide, then she receives no points. In addition, when Lisa enters the game, she is motivated to peruse everyone’s answers in case someone got something wrong, and she can correct them and get credit. Lisa sees JoAnn’s mistake and provides the correction. Lisa gets the 10 points for JoAnn’s slide. But Lisa also gets to play a slide herself. She chooses the slide “Gertie Lou’s Weight History” and provides the correct answer. Lisa gains 10 points for the correct answer plus 10 bonus points for “scooping” JoAnn. Therefore, an element of competition is introduced. But JoAnn will get a chance to earn her points back because there are seven “Correct or Scooped” games during the semester. Lost points can be made up in a later round. Finally, since each student can choose which example to respond to (there are 35 students and 40 examples in each round), there is little ground for a student to complain that he or she does not have a fair chance.
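The scoring rules described above can be sketched in a few lines of Python. This is only an illustrative model, not the authors' tooling: the `Round` class, its method names, and the rule that a student cannot scoop his or her own slide are assumptions introduced here. Only the 10-point values and the JoAnn and Lisa scenario come from the text.

```python
from dataclasses import dataclass, field

POINTS_PER_SLIDE = 10  # from the article: a correct answer earns 10 points


@dataclass
class Round:
    """One "Correct or Scooped" round (hypothetical model for illustration)."""
    slides: list[str]
    claimed: dict[str, str] = field(default_factory=dict)  # slide -> student who played it
    wrong: set[str] = field(default_factory=set)           # slides answered incorrectly
    scores: dict[str, int] = field(default_factory=dict)   # student -> points this round

    def _award(self, student: str, points: int) -> None:
        self.scores[student] = self.scores.get(student, 0) + points

    def play(self, student: str, slide: str, correct: bool) -> None:
        """A student claims an unplayed slide; only a correct answer earns points."""
        if slide not in self.slides:
            raise ValueError(f"unknown slide: {slide}")
        if slide in self.claimed:
            raise ValueError(f"{slide} has already been played")
        self.claimed[slide] = student
        if correct:
            self._award(student, POINTS_PER_SLIDE)
        else:
            self._award(student, 0)
            self.wrong.add(slide)

    def scoop(self, student: str, slide: str) -> None:
        """A classmate corrects a wrong answer and collects that slide's points."""
        if slide not in self.wrong:
            raise ValueError(f"{slide} has no wrong answer to scoop")
        if self.claimed[slide] == student:
            # Assumption: students may not scoop their own slide.
            raise ValueError("a student cannot scoop his or her own slide")
        self.wrong.remove(slide)
        self._award(student, POINTS_PER_SLIDE)


# The JoAnn and Lisa scenario from the text.
game = Round(slides=["June Flower Sales", "Gertie Lou's Weight History"])
game.play("JoAnn", "June Flower Sales", correct=False)
game.scoop("Lisa", "June Flower Sales")
game.play("Lisa", "Gertie Lou's Weight History", correct=True)
print(game.scores)  # {'JoAnn': 0, 'Lisa': 20}
```

Because a slide can be claimed only once and there are more slides than students, every student in this model always has at least one unplayed slide to choose from.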

Also, the Correct or Scooped Game allows the instructor to give each individual student the opportunity to demonstrate his or her skills in front of the class. This opportunity is an improvement over how the various correlating activities would proceed in a face-to-face classroom. In the face-to-face classroom, a few examples could be presented on the board for students to solve in front of the class, or students could be put in groups to practice identifying paragraph organizational patterns, for example. Time constraints prohibit allowing every student an individual chance to take a turn at the board.

In the online technical writing class, paragraph organization assignments are also often challenging. In the current online course research using VoiceThread, the authors address this problem with the Correct or Scooped Game. As a result, each student is presented with a turn at identifying organizational patterns. In the Correct or Scooped Game for Organizational Patterns, 40 different “game pieces” displaying 40 paragraphs with the sentences in the wrong order are presented to the students for their activity. Students choose one of the paragraphs that has not yet been attempted or “played” and use the doodling function in VoiceThread to show what order the sentences should be in and what strategy (cause and effect, chronological, spatial, etc.) is being used to organize the paragraph. In addition, a key element of identifying a paragraph’s organization pattern involves identifying the transitions among sentences. In effect, one gets a better grasp of organizational patterns when one is called upon to identify the strategies in the paragraph itself that are used to create the organizational pattern. The doodling function of VoiceThread allows each student to demonstrate his or her ability to organize a paragraph and identify the organizational strategy. Students can use the doodling function to number each sentence in the paragraph in the order that it should appear. For example, the student doodling on the image in Figure 5 has identified the sixth sentence as the one that should go fourth in the paragraph. Please note that this sample paragraph comes from an out-of-print edition of the Scott Foresman Handbook.

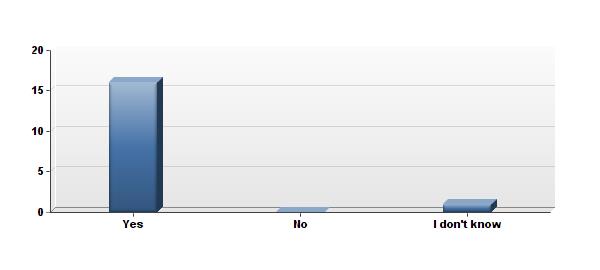

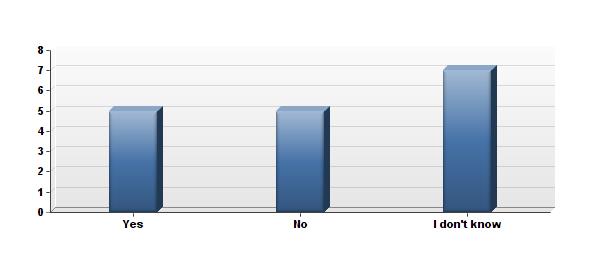

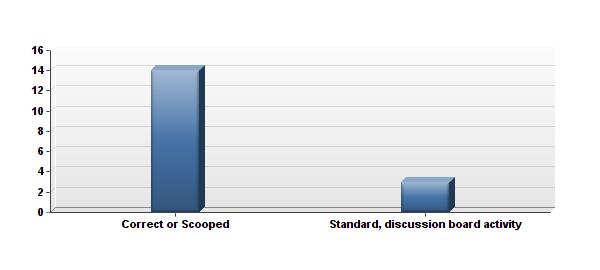

As can be seen, each game is a little different, but all involve the opportunity to scoop points. The opportunity to scoop points keeps students attentive to the assignments and aware of peers’ postings, which leads to a high level of interaction during these assignments. The clear goals of each assignment and each one’s direct tie to concepts under discussion mitigate complaints of busy work. And in evaluations, students referenced this activity as meaningful, and one student pronounced it “brilliant.” In a summer 2014 survey, 17 students responded to several questions about their experiences with VoiceThread in the online classroom. Sixteen out of seventeen said the exercise helped them to practice skills they learned in class. Only five of the seventeen respondents said they had used the game to study for an upcoming exam. However, all but two said they preferred the VoiceThread activity over a standard discussion board activity.

The results are illustrated below.

Do you think this activity helped you to practice skills/information that you are learning about in the class?
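For readers who want the survey counts reported above as percentages, the arithmetic can be sketched as follows. The counts (16, 5, and "all but two" of 17 respondents) come from the text; the dictionary labels are illustrative names chosen here, not the wording of the survey items.

```python
RESPONDENTS = 17  # summer 2014 survey size reported in the article

# Counts taken from the text; the keys are illustrative labels only.
counts = {
    "helped practice skills": 16,
    "used game to study for an exam": 5,
    "preferred VoiceThread to discussion board": RESPONDENTS - 2,  # "all but two"
}

# Convert each count to a percentage of respondents, rounded to one decimal place.
percentages = {label: round(100 * n / RESPONDENTS, 1) for label, n in counts.items()}

for label, pct in percentages.items():
    print(f"{label}: {counts[label]}/{RESPONDENTS} = {pct}%")
```

With only 17 respondents, each individual answer shifts a percentage by roughly six points, so these figures are best read as descriptive rather than as precise estimates.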

The qualitative analysis yields a few general goals for online courses that will increase student engagement:

- Give students more options to comment and share the assignment.

- Give students an opportunity to “show off” their multimedia skills as they think about the assignment.

- Make the discussion more engaging by giving the students more freedom in how and what they share.

- Elevate the online discussion to the level of intellectual exchange.

- Foster “concept checkup” opportunities using collaborative interaction.

- Set clear goals and directions for each assignment.

- Create assessment and grading for each assignment.

- Give students something to gain by interacting with others in this assignment.

Quantitative Analysis and Discussion

The second course that was used to analyze the use of VoiceThread was an introductory programming logic course from the Information Systems Department. The programming logic course is designed to teach students concepts and development of software applications. Students learn concepts of how to logically structure programs and develop actual applications. Quizzes are among the assessment tools in this course. There are seven quizzes; three of the quizzes are administered via VoiceThread using the “Correct or Scooped” structure discussed in the previous section. The remaining four quizzes are also administered online but in a traditional question/answer format.

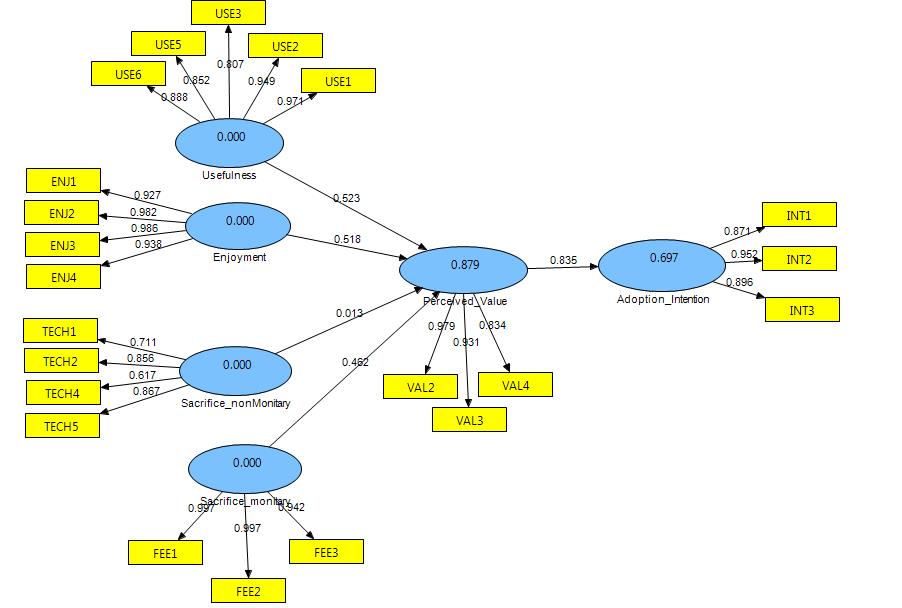

As part of the model analysis some indicators were dropped and the operational model with the final list of indicators is depicted in Figure 6. As shown in Figure 6, the final indicators for the constructs are five for usefulness, four for enjoyment, four for non-monetary sacrifice, three for monetary sacrifice, three for perceived value, and three for adoption intention. Indicators are questionnaire items asked in the survey. All indicators used in this study were defined and validated in prior studies.

All indicators loaded on their own distinct factor; i.e. the questions used to ask about each construct were distinct to that construct and did not cross-load on a different construct, confirming prior research validation that the survey questions were appropriate for each construct. A second level of confirmation that the survey questions were appropriate is performed using convergent validity. Convergent validity indicates the level to which the survey questions (indicators) are measuring a given construct. The statistical measure for convergent validity is meeting a factor loading minimum threshold of 0.6 for each indicator. As shown in Figure 6, all indicators show convergent validity with factor loading above the 0.6 level.
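The convergent validity check described above amounts to comparing each indicator's factor loading with the 0.6 minimum. A minimal sketch of that comparison follows; the function name and the example loadings are placeholders introduced here for illustration and are not the values reported in Figure 6.

```python
THRESHOLD = 0.6  # minimum factor loading cited in the article


def failing_indicators(
    loadings: dict[str, dict[str, float]], threshold: float = THRESHOLD
) -> list[str]:
    """Return "construct/indicator" names whose loading falls below the threshold."""
    return [
        f"{construct}/{indicator}"
        for construct, items in loadings.items()
        for indicator, value in items.items()
        if value < threshold
    ]


# Placeholder loadings for illustration only; these are NOT the values from Figure 6.
example = {
    "usefulness": {"U1": 0.82, "U2": 0.75},
    "enjoyment": {"E1": 0.91, "E2": 0.55},
}
print(failing_indicators(example))  # ['enjoyment/E2']
```

An empty list would correspond to the situation the article reports, in which every retained indicator meets the threshold.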

There are two conclusions that can be drawn from the operational model:

1. Adoption intention and perceived value of VoiceThread. The variance explained in adoption intention is 0.697, as shown inside the “adoption intention” construct; this means 69.7% of the variance of adoption intention for VoiceThread can be explained by the constructs used in the model. Similarly, the variance explained in perceived value is 0.879, as shown inside the “perceived” construct; this means 87.9% of the variance for perceived value of VoiceThread can be explained by the constructs used in the model. Variance explained above 50% is a strong indication for confirming a model, i.e. VoiceThread adoption intention and perceived value can be predicted by studying the technology usefulness, enjoyment, non-monetary and monetary sacrifice—the four independent constructs in the model.

2. Predictive ability of the constructs used in the model. The predictive strength of the constructs is shown by the path coefficients; that is, the strength of the relationship between two constructs is reflected by the relative magnitude of the number shown on the path. Typically, path coefficients above 0.2 are statistically significant. All path coefficients except for monetary sacrifice are above 0.2. This indicates monetary sacrifice did not contribute to this model, but all other constructs were strong predictors of the perceived value and adoption intention of VoiceThread. The significance of these relationships is further confirmed by the T-statistics. The T-statistics for all constructs, except the non-monetary sacrifice, were high, indicating a significance level of 0.001, i.e. a 99.9% confidence level that the hypothesized relationships in the model are supported.
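The two quantities discussed above, variance explained and significance at the 0.001 level, can be illustrated with a short calculation. The sketch below converts the reported variance-explained values to percentages and computes the two-tailed critical value for a 0.001 significance level. It treats the T-statistic as approximately standard normal, which is a large-sample assumption made here for illustration; the article does not state how its T-statistics were evaluated, and the function names are hypothetical.

```python
from statistics import NormalDist


def variance_explained_pct(r_squared: float) -> float:
    """Express a variance-explained value (R squared) as a percentage."""
    return round(100 * r_squared, 1)


def two_tailed_p(t_stat: float) -> float:
    """Approximate two-tailed p-value, treating the statistic as standard normal.

    Assumption: a large-sample normal approximation, not the authors' procedure.
    """
    return 2 * (1 - NormalDist().cdf(abs(t_stat)))


print(variance_explained_pct(0.697))  # 69.7 (adoption intention)
print(variance_explained_pct(0.879))  # 87.9 (perceived value)

# Critical value a statistic must exceed for two-tailed significance at 0.001.
critical = NormalDist().inv_cdf(1 - 0.001 / 2)
print(round(critical, 2))  # 3.29
```

Under this approximation, a T-statistic larger than about 3.29 in absolute value corresponds to the 0.001 significance level mentioned in the text.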

Conclusion

This research provides a structure for creating an engaging online classroom and provides empirical support showing the value of a collaborative tool: VoiceThread. The two research questions: (1) How does the instructor increase student engagement in an online course? and (2) How does the instructor evaluate the value of a collaborative online tool? are discussed using qualitative and quantitative methods, respectively.

Our qualitative research shows successful student engagement when the online course structure includes giving students options to comment and share the assignment, giving students opportunities to “show off” their multimedia skills as they think about the assignment, making the discussion more engaging by giving the students more freedom in how and what they share, elevating the online discussion to the level of intellectual exchange, fostering “concept checkup” opportunities using collaborative interaction, setting clear goals and directions for each assignment, creating assessment and grading for each assignment, and giving students something to gain by interacting with others in this assignment (gamifying the assignment).

We further confirm empirically that the online collaborative tool, VoiceThread, has value for students and increases their intention to adopt VoiceThread. Through this study, the researchers found that in both qualitative and quantitative research, VoiceThread adds value to the online classroom discussion, provided it is paired with a gaming aspect such as the Correct or Scooped game.

REFERENCES

Bomar, Shannon. “A Pre-Reading VoiceThread.” Knowledge Quest 37, no. 4 (2009): 26–27.

Bran, Ramona. “Do the Math: ESP+ Web 2.0= ESP 2.0!” Procedia-Social and Behavioral Sciences 1, no. 1 (2009): 2519–23.

Brunvand, Stein, and Sara Byrd. “Using VoiceThread to Promote Learning Engagement and Success for All Students.” Teaching Exceptional Children 43, no. 4 (2011): 28–37.

Davis, Fred D. “Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology.” MIS Quarterly, 1989, 319–40.

Davis, Fred D., Richard P. Bagozzi, and Paul R. Warshaw. “User Acceptance of Computer Technology: A Comparison of Two Theoretical Models.” Management Science 35, no. 8 (1989): 982–1003.

Harris, Kenya. “Multifarious Instructional Design: A Design Grounded in Evidence-Based Practice.” Teaching and Learning in Nursing 6, no. 1 (2011): 22–26.

Hew, Khe Foon, and Wing Sum Cheung. “Use of Web 2.0 Technologies in K-12 and Higher Education: The Search for Evidence-Based Practice.” Educational Research Review 9 (2013): 47–64.

Kim, Hee-Woong, Hock Chuan Chan, and Sumeet Gupta. “Value-Based Adoption of Mobile Internet: An Empirical Investigation.” Decision Support Systems 43, no. 1 (2007): 111–26.

Ng, Wan. “Can We Teach Digital Natives Digital Literacy?” Computers & Education 59, no. 3 (2012): 1065–78.

Siegle, Del. “Technology: Presentations in the Cloud with a Twist.” Gifted Child Today 34, no. 4 (2011): 54–58.

[i] Stein Brunvand and Sara Byrd, “Using VoiceThread to Promote Learning Engagement and Success for All Students,” Teaching Exceptional Children 43, no. 4 (2011): 28–37.

[ii] Shannon Bomar, “A Pre-Reading VoiceThread,” Knowledge Quest 37, no. 4 (2009): 26–27.

[iii] Del Siegle, “Technology: Presentations in the Cloud with a Twist,” Gifted Child Today 34, no. 4 (2011): 57.

[iv] Kenya Harris, “Multifarious Instructional Design: A Design Grounded in Evidence-Based Practice,” Teaching and Learning in Nursing 6, no. 1 (2011): 22–26.

[v] Wan Ng, “Can We Teach Digital Natives Digital Literacy?,” Computers & Education 59, no. 3 (2012): 1065–78.

[vi] Ramona Bran, “Do the Math: ESP+ Web 2.0= ESP 2.0!,” Procedia-Social and Behavioral Sciences 1, no. 1 (2009): 2519–23.

[vii] Khe Foon Hew and Wing Sum Cheung, “Use of Web 2.0 Technologies in K-12 and Higher Education: The Search for Evidence-Based Practice,” Educational Research Review 9 (2013): 47–64.

[viii] Hee-Woong Kim, Hock Chuan Chan, and Sumeet Gupta, “Value-Based Adoption of Mobile Internet: An Empirical Investigation,” Decision Support Systems 43, no. 1 (2007): 111–26.

[ix] Fred D. Davis, “Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology,” MIS Quarterly, 1989, 319–40; Fred D. Davis, Richard P. Bagozzi, and Paul R. Warshaw, “User Acceptance of Computer Technology: A Comparison of Two Theoretical Models,” Management Science 35, no. 8 (1989): 982–1003.

About the Authors

Dr. Solomon Negash is Professor of Information Systems and Executive Director of the MADLab Center (Mobile Application Development—MAD) in the Coles College of Business at Kennesaw State University. His research focuses on mobile applications, classroom technology, technology adoption, technology transfer, and outsourcing. He has published over a dozen journal papers, two edited books, several book chapters, and over three dozen conference proceedings. Dr. Negash has over 20 years of industry experience working as a manager, consultant, and systems analyst and 12 years as professor teaching at the graduate and undergraduate levels. He has served in several capacities including editor-in-chief of the African Journal of Information Systems; plant manager at a US manufacturing facility; strategic thinking and planning committee member at Kennesaw State University; coordinator of an international PhD program at Addis Ababa University, Ethiopia; founder and first chairman of the Ethiopian Information and Communication Technology advisory body; and founder of the Bethany Negash Memorial Foundation, a charitable organization which has delivered over 400,000 books and 3,000 computers to Ethiopian colleges and libraries. Dr. Negash is the recipient of the 2007 distinguished intellectual contribution award, the 2005 and 2007 distinguished e-Learning award, the 2007 distinguished graduate teacher award, and the 2010 Distinguished Service award from Kennesaw State University. Additionally, he received the International Goodwill and Understanding award from Soroptimist International.

Dr. Tamara Powell is the Director of the College of Humanities and Social Sciences Office of Distance Education at Kennesaw State University in Kennesaw, GA. She is an Associate Professor of English and affiliated with the African and African Diaspora Studies program at KSU. Her PhD work at Bowling Green State University in Bowling Green, Ohio focused on multiethnic American literature. A recent publication is “The Ubiquitous Basketball as Essence of Genius: Narrative Structure in Sherman Alexie’s ‘Saint Junior,’ ” published in the Journal of Ethnic American Literature in 2013. Dr. Powell created the “Build a Web Course Workshop,” a faculty development workshop that won the 2010 Sloan-C Award for Excellence in Faculty Development for Online Teaching. She is always interested in instructional technology, and she worked with colleagues to write “ ‘Which Technology Do I Use to Teach Online?’ Online Technology and Communication Course Instruction,” published in JOLT: Journal of Online Teaching and Learning 8.4 (2013).
