Riki Thompson, University of Washington Tacoma

Meredith J. Lee, Leeward Community College

Abstract

Evolving digital technology has allowed instructors to capitalize on new tools to provide audiovisual feedback. As universities move increasingly toward hybrid classrooms and online learning, and consequently invest in classroom management tools and communicative technologies, communication with students about their work is also transforming. Instructors in all fields are experimenting with a variety of tools to deliver information, present lectures, conference with students, and provide feedback on written and visual projects. Experimentation with screencasting technologies in traditional and online classes has yielded fresh approaches to engage students, improve the revision process, and harness the power of multimedia tools to enhance student learning (Davis and McGrail 2009, Liou and Peng 2009). Screencasts are digital recordings of the activity on one’s computer screen, accompanied by voiceover narration; they can be used in any class where assignments are submitted in an electronic format. We argue that screencast video feedback serves as a better vehicle than traditional written comments for in-depth explanatory feedback that creates rapport and a sense of support for the writer.

“I can’t tell you how many times I’ve gotten a paper back with underlines and marks that I can’t figure out the meaning of.”

–Freshman Composition Student

Introduction

The frustration experienced by students after receiving feedback on assignments is not unique to the student voice represented here. Studies on written feedback have shown that students often have difficulty deciphering and interpreting margin comments and therefore fail to apply such feedback to successfully implement revisions (Clements 2006, Nurmukhamedov and Kim 2010). A number of years ago one of us participated in a study about student perceptions of instructor feedback. The researcher interviewed several students, asking how they interpreted her feedback and what sorts of changes they made in response to the feedback (Clements 2006). Students reported that some comments were indecipherable, others made little sense to them, and some were disregarded altogether.

Clements (2006) suggests that the disconnect between feedback and revision is complicated by a number of factors, including the legibility of handwriting and editing symbols which sometimes read more like chicken scratch than a clear message. Students usually did their best to interpret the comment rather than ask for clarification. Other times, students made revision decisions based on a formula that weighed the amount of effort in relation to the grade they would receive. In other words, feedback that was easier to address gained priority, and feedback that required deep thinking and a great deal of cognitive work was dismissed. Sometimes these decisions were made out of sheer laziness. Other times students’ lack of engagement with feedback was a strategic triage move to balance the priorities of school, work, and home life. These findings motivated us to find more effective ways to provide feedback that students could understand and apply to improve their work.

We both rely upon a combination of written comments and conferences to provide feedback and guidance with student work-in-progress, but we find that written comments make it too easy to mark every element that needs work rather than highlight a few key points for the student to focus on. We often struggled to limit our comments to avoid overloading our students and making feedback ineffective, as research in composition studies shows that students get overwhelmed by extensive comments (White 2006). After years of using primarily written comments to respond to student papers, we were often frustrated by the limits presented by this form of feedback.

Wanting to intellectually connect with students and explore ideas collaboratively while reading a paper, we are often having a conversation in our own heads, engaging the text and asking questions. We experience moments of excitement when we read something that engages us deeply. We think, “Wow! I love this sentence!” or “Yes! I completely agree with the argument you’re making,” or “I hadn’t thought of it that way before.” We also ask questions: “What were you thinking here?” or “Why did you start a new paragraph here?” in hopes that the answers appear in the next draft. Unfortunately, written comments often take the form of concise but dense explanations that students find difficult to unpack. That is, the necessary supplemental explanation that students require for meaning-making remains largely in our heads, rather than appearing on student papers. Thus, we wanted to make the feedback process more conversational, less confusing, and less intimidating for students, especially in online classes.

In both of our teaching philosophies, our primary motivation as writing teachers is to help our students improve upon their own ideas by revising their writing and utilizing feedback from us and their classmates. Thus, we recognize that our feedback needs to be personalized and conversational in nature. We don’t want our feedback to be perceived as a directive, which we know results in students focusing all their energy on low-priority errors rather than considering global issues. Instead, we want our feedback to inspire students to think about what they’ve written and how they might write it in a way that is more persuasive, clearer, or more nuanced for their intended audience. Moreover, we want their writing to be intentional; we don’t want students to think writing should be merely a robotic answer to an assignment prompt. With goals such as these, it’s no surprise that we found traditional feedback methods insufficient. We teach students that argumentation is about responding to a rhetorical situation (joining the conversation, so to speak), and yet our written feedback was not effectively serving that purpose.

To remedy this problem, we experimented with screencasting technology as a tool to provide students with conversational feedback about their work-in-progress. Screencasts are digital recordings of the activity on one’s computer screen, accompanied by voiceover narration. Screencasting can be used by professors in any class to respond to any assignment that is submitted in an electronic format, be it a Word document, text file, PowerPoint presentation, Excel spreadsheet, Web site, or video. While using Screen Capture Software (SCS), we found that screencasting has most commonly been used pedagogically to create tutorials that extend classroom lectures.

Screencasting has been used as a teaching tool in a variety of fields, with mostly positive results reported, specifically in relation to providing students with information and creating additional avenues of access to teaching and materials. In the field of chemical engineering, screencasting has served as an effective supplement to class time and textbooks (Falconer, deGrazia, Medlin, and Holmberg 2009). A study of student perceptions and test scores in an embryology course that used screencasting to present lectures demonstrated enhanced learning and a positive effect on student outcomes (Evans 2011). Asynchronous access to learning materials—both to make up for missed classes as well as to review materials covered in class—is another benefit of screencasting in the classroom (Vondracek 2011, Yee and Hargis 2010). An obvious advantage for online and hybrid classrooms, this type of access to materials also creates greater access for brick-and-mortar universities, especially those that serve nonresidential and place-bound student populations. Research on screencasting in the classroom is limited, but so far it points to this technology as a powerful learning tool.

While most of the research on screencasting shows positive results for learning, such studies focus on how this digital technology serves primarily as a tool to supplement classroom instruction; no research has yet shown how it can be used as a feedback tool that improves learning (and writing) through digitally mediated social interaction. This study examines the use of, and student reactions to, what we call veedback, or video feedback, as a means of providing guidance on a variety of assignments. We argue that screencast video feedback serves as a better vehicle than traditional written comments for in-depth explanatory feedback that creates rapport and a sense of support for the writer.

Literature Review

Best practices in writing studies suggest that feedback goes beyond the simple task of evaluating errors and prompting surface-level editing. The National Council of Teachers of English (NCTE) position statement on teaching composition argues that students “need guidance and support throughout the writing process, not merely comments on the written product,” and that “effective comments do not focus on pointing out errors, but go on to the more productive task of encouraging revision” (CCCC 2004). In this way, feedback serves as a pedagogical tool to improve learning by motivating students to rethink and rework their ideas rather than simply proofread and edit for errors. At the 2011 Conference on College Composition and Communication, Chris Anson (2011) presented findings from a study of oral- versus print-based feedback, arguing that talking to students about their writing provides them with more information than written comments.

The task of providing comments that students can engage with remains a challenge, especially when feedback is intended to help students learn from their mistakes and make meaningful revisions. Not only for composition instructors but also for any instructor who requires written assignments, providing students with truly effective feedback has long been a challenge both in terms of quality and quantity. Notar, Wilson, and Ross (2002) stress the importance of using feedback as a tool to provide guidance through formative commentary, stating that “feedback should focus on improving the skills needed for the construction of end products more than on the end products themselves” (qtd in Ertmer et al. 2007, 414). Even when it provides an adequate discussion of the strategies of construction, written feedback can often become overwhelming.

Written comments usually consist of a coded system of some sort, varying in style from teacher to teacher. Research about response styles has shown that instructors tend to provide feedback in categorical ways, with the most common response style focused primarily on marking surface features and taking an authoritative tone to objectively assess right and wrong in comments (Anson 1989). Writing teachers, for example, tend to use a standard set of editing terms and abbreviations, although phrases, questions, and idiosyncratic marks are also common. According to Anson (1989), other teachers used feedback to play the role of a representative reader within the discourse community, commenting on a broad range of issues, asking questions, expressing preferences, and making suggestions for revision. Comments can be both explicit–telling students when an error is made and recommending a plan of action–and indirect, implying that something went well or something is wrong. In this way, indirect feedback seems a bit like giving students a hint, similar to the ways in which adults give children hints about where difficult-to-find Easter eggs might be hidden in the yard. Although the Easter egg hunt is intended to challenge children to solve a puzzle of where colorful eggs might be hidden from view, adults provide clues when children seem unable to figure out the riddle. In other words, adults give guidance when children seem lost, similar to the ways instructors give guidance to students who seem to have veered off track.

Written feedback tends to be targeted and focused, with writers filtering out the extraneous elements of natural speech that may further inform the reader/listener. All communication—whether it be written or spoken—is intrinsically flawed and problematic (Coupland et al. 1991), such that the potential for miscommunication is present in all communicative exchanges. Thurlow et al. (2004, 49) argue that nonverbal cues such as tone of voice usually “communicate a range of social and emotional information.” Everyday speech is filled with hesitations, false starts, repetitions, afterthoughts, and sounds that provide additional information to the listener (Georgakopolou 2004). Video feedback allows instructors to model a reader response, with the addition of cues that have the potential to help students take in feedback as part of an ongoing conversation about their work instead of a personal criticism. We recognize that this claim assumes that an instructor’s verbal delivery is able to mitigate the negativity that a student may interpret from written comments and that the instructor models best practices for feedback regardless of medium.

Serving as a medium that allows instructors to perform a reader’s response for students, digital technology can be an effective tool to continue the conversation about work-in-progress. By talking to students and reading their work aloud, instructors can engage students on an interpersonal level that is absent in written comments. It’s about hearing the reader perform a response full of interest, confusion, and a desire to connect with the ideas of the writer. This type of affective engagement with student work is something that students rarely see, hear, and sense—the response from another reader that’s not their own. Veedback offers students an opportunity to get out of their heads and hear the emotional response that is more clearly conveyed through spoken words than writing.

Thus, audiovisual feedback has the potential to motivate students and increase their engagement in their own learning, rather than just to assess the merits of a written product or prompt small-scale revision. Holmes and Gardner (2006, 99) point out that student motivation is multifaceted within a classroom and point to “constructive, meaningful feedback” as characteristic of a motivational environment. Changing digital technology has allowed for instructors to capitalize on new or evolving digital tools in creating that motivational environment.

As universities move toward hybrid classrooms and online learning and consequently make investments in classroom management tools and communicative technologies, communication with students about their writing is also transforming. Instructors in all fields are experimenting with a variety of tools to deliver information, present lectures, conference with students, and provide feedback on written and visual projects.

Experimentation with digital technologies in traditional and online composition classes has yielded fresh approaches to engage student writers, improve the revision process, and harness the power of multimedia tools to enhance student learning (Davis and McGrail 2009, Liou and Peng 2009). By employing screencast software as a tool to talk to students about their work-in-progress, we are adding another level of interpersonal engagement—palpably humanizing the process.

Our Pedagogy

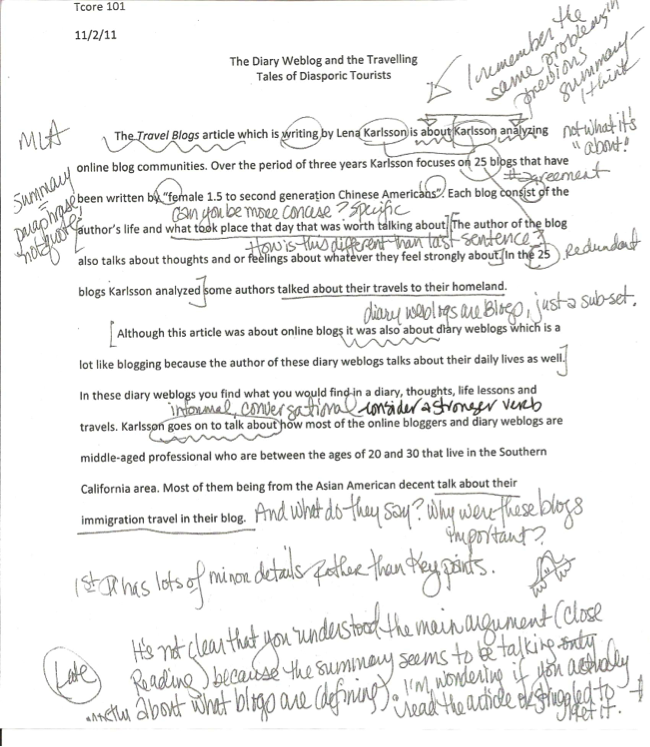

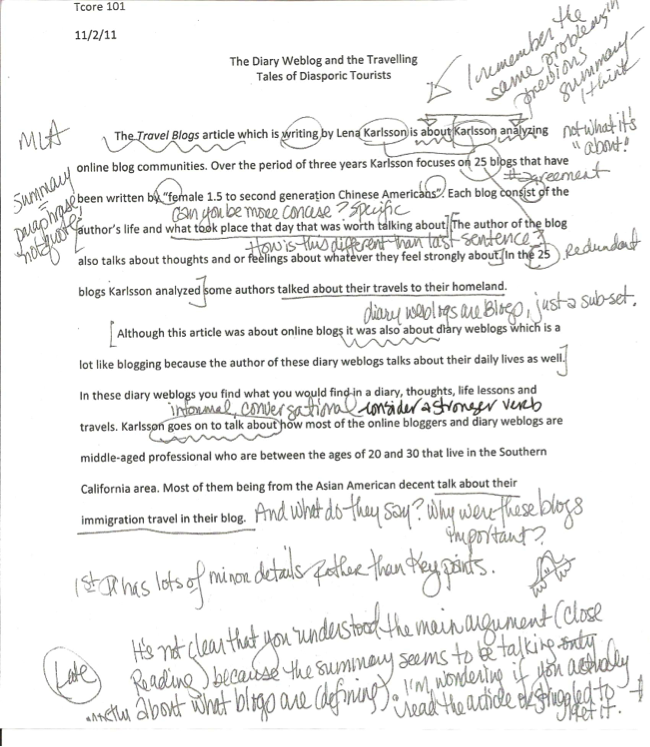

Because inquiry and dialogue are foundational to our pedagogical practice, writing workshops, teacher-student conferences, and extensive feedback in which we attempt to take on the role of representative reader are common in our courses. Although we each work hard not to be the teacher who provides students with feedback that they don’t understand, more often than we would like to admit, we know that we too are sometimes those teachers who use underlines and marks that make little sense to our students, as this paper with written comments demonstrates (Figure 1).

Figure 1. A student paper with written comments.

Even after we inform students of our respective coding systems, many remain confused. This example is one instructor’s chart of editing marks given to students with their first set of written feedback (Figure 2).

Figure 2. A chart of editing marks given to students with their first set of written feedback.

We know students are confused by written comments because some students come to office hours and share their confusion over some of our statements and questions. Many confirm that they don’t really know what to do with the comments or how to make the move to improve their work or transfer their learning to the next assignment or draft. Students’ difficulty in decoding comments may be based on their expectations of feedback as directive rather than collaborative and conversational. Moreover, students’ prior (learned) experiences with feedback may color the way students read and respond to comments. That is, many students expect directive feedback and believe that the appropriate response is merely to edit errors and/or delete sections that are too difficult to revise. Thus, students feel confused (and frustrated) when a comment does not yield a specific solution that fits into the paradigm of “what the teacher wants.”

Although we both require student conferences (in person or in a digitally mediated form via phone, Skype, or Blackboard Collaborate) as one of the most important pedagogical tools to improve student writing, we acknowledge the limitations of conferences as the primary means of giving feedback. Time is the most obvious obstacle. While allowing the most personalized instruction for each student, one-on-one student-teacher conferences are labor-intensive for the teacher. Conferences are usually held only twice in a sixteen-week semester (or ten-week quarter) and are characterized by a non-stop whirlwind of twenty-minute appointments. For those teaching at nonresidential university campuses and community colleges, requiring students to schedule a writing conference outside of class time is even more challenging, as most are overextended with jobs and family responsibilities. The most important feature of writing conferences is their dialogic nature: the conversation about the work-in-progress and the collaborative planning about how to make improvements. Acknowledging both the effectiveness and limitations of face-to-face conferencing, we considered alternatives to the traditional writing conference.

Initially, one of us experimented with recording audio comments as a supplement to written comments and an extension of the writing conference, but was not satisfied with the results. This method requires the instructor to annotate a print-based text (which is problematic for online courses and digitally mediated assignments) in addition to creating a downloadable audio file. The separation of the annotated text from comments can create logistical problems for students finding and archiving feedback and create extra work for the instructor providing it.

When we discovered screencasting, we began to experiment with this digital tool as an alternative form of feedback. We each employed Jing screen-recording software to record five minutes of audiovisual commentary about a student’s work. This screencasting software enabled us to save the commentary as a flash video that could be emailed or uploaded to an electronic dropbox. This screenshot shows what appears on the screen for students when they click a link to view video feedback hosted on the Dropbox site.

Opportunities and Obstacles

New methods of delivering instruction, such as in hybrid or online courses, create a need to solve the feedback dilemma in a variety of ways. We believe a key component to effective feedback is the collaborative nature of conversation built upon a rapport cultivated in “normal” classroom interaction. However, with limited (or no) face-to-face time between instructor and student (or between student and student), creating a collaborative and conducive environment for writing is a challenge as the tone of the class is often set by the “performance” of the instructor during class. In online environments, students cannot see or hear their instructors or their classmates, which can potentially stifle the creation of a positive learning community. The face-to-face experiences of the traditional classroom allow students to develop rapport with a teacher, which can mitigate the feeling of criticism associated with formative feedback.

Without these face-to-face experiences, students in online classes are more likely to disengage with course content, assignments, and their instructor and classmates. This increased tendency to disengage is evidenced in the lower completion rate for online classes. According to a Special Report by FacultyFocus, “the failed retention rate for online courses may be 10 to 20 percent higher than for face-to-face courses.” And according to Duvall et al. (2003), the lack of engagement by students in online courses is linked to the instructor’s “social presence.” They state that “social presence in distance learning is the extent that an instructor is perceived as a real, live person, rather than an electronic figurehead.” Research shows that the relationship between student and teacher is often an important factor for retention (CCSSE – Community College Survey of Student Engagement n.d., NSSE Home n.d.); this relationship is a compelling argument for why we should look for socially interactive ways to respond to our students’ work.

While multimedia technology has allowed instructors to create more “face time” with students in an online class, technological savvy does not automatically translate into more social presence. While we would agree that any use of audio/video formats in the online class contributes to creating a learning community, video lectures are not personal in the same way that face-to-face lectures are not personal. In providing feedback on individual students’ writing, we are engaging in a conversation with our students about their own work—a prime opportunity to personalize instruction to meet student needs (also called differentiated instruction).

Logistically, screencasting has its challenges, such as those we encountered—additional time at the computer and a quiet place to record the videos—but we both discovered ways to mitigate those challenges. For example, one author found that this medium relegates the instructor to a quiet space and the other experienced limited storage capacity on her institution’s server. The first author discovered that a noise-cancelling headset allowed her to be mobile while using this feedback method. And the second author had to create alternative means of delivery and archiving by giving students the option of receiving video files via email, downloading and deleting files from the dropbox, or accessing videos via Screencast.com, which is not considered “private” by her institution.

Initially, the process was time-consuming because it was difficult to get out of the habit of working with a hard copy; we each initially wrote comments or brief notations on a paper (or digital) version as a basis for the video commentary. Keeping to the five-minute time limit was also a challenge, but the time limit also helped us to focus on the major issues in students’ writing rather than on minor problems. Perhaps most importantly, as we have become accustomed to the process, it takes us less time to record video comments than when we started using screencasting for feedback. Moreover, positive student response has encouraged us to be innovative in addressing the drawbacks.

Veedback allows instructors to move the cursor over content on the screen and highlight key elements while providing audio commentary as shown in this response paper. These two samples (a response paper [Video 1] and an essay draft [Video 2]) show how instructors can take advantage of the audiovisual aspects of screencasting to engage students in learning.

Video 1. The instructor highlights key elements while providing audio commentary on a response paper.

Video 2. A student essay.

After providing commentary within a student paper, this sample shows how instructors discuss overall strengths and weaknesses by pasting the evaluation rubric into the electronic version of the student essay and marking ranges (Video 3).

Video 3. The instructor has pasted the evaluation rubric into the electronic version of the student essay and marked ranges.

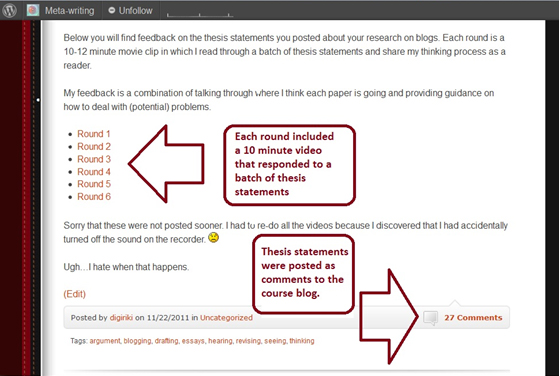

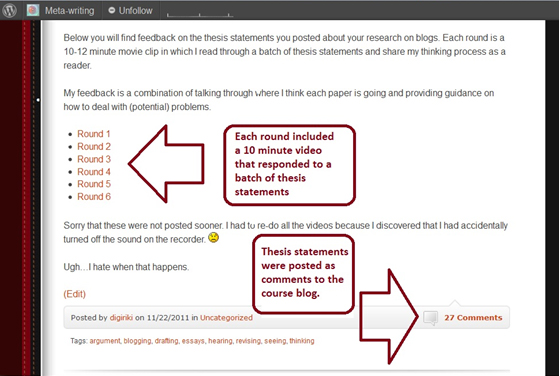

One of the many ways in which we used screencasting was to give feedback about work-in-progress that was posted to online workspaces, such as a course blog or discussion board. In this case, students posted drafts of their thesis statement for their essay on the blog and we responded to a group of them in batches and linked to the feedback on the course blog (Figure 3).

Figure 3. The instructors responded to thesis statements in batches and linked to the feedback on the course blog.

This method gave students access to an archive of feedback through the course blog and allowed for an extension of in-class workshops about work-in-progress to help students focus their research essays.

This snippet of one of the ten-minute videos mentioned above shows how one of the authors uses the audiovisual medium to show students how their writing may be seen and heard simultaneously (Video 4).

Video 4. A snippet of a video response to student work.

We have also found veedback to be especially useful for presentations because screencasting software allows us to start a conversation about the impact of visual composition and to manipulate the original document to present alternatives. In this particular sample veedback, the instructor used a sample presentation for an in-class workshop and ran screencasting software to provide an archive of notes that students could access when they were ready to revise (Video 5).

Video 5. A sample veedback presentation.

Methods

Screencasting was used in five sections of college-level writing courses by two instructors. Students from two sections of one author’s research and argument course were surveyed about screencasting feedback on essay drafts and PowerPoint presentations. In the second author’s three online sections of a research and writing course, students were informally asked about the use of veedback, and one online section was surveyed. The screencasts were produced on PCs using Jing software to create individual Flash movie files to be shared and posted to the classroom management system for student access. Both instructors also used screencasting as an extension of classroom lectures by offering mini-workshops on specific aspects of writing and providing tutorials for assignments and software use. Veedback was used instead of written comments, not in addition to them; in other words, assignments that received veedback did not receive written comments, only some highlighting or strikethrough font in the files returned to students. We employed a color-coding system to differentiate between types of comments. For example, yellow highlighting may signal grammatical errors, and green highlighting may mark problems with content or interpretation, as shown in this example of veedback on an annotated bibliography assignment.

Students in the first author’s classes were asked to fill out an optional, anonymous, web-based questionnaire that would provide feedback about the course. Along with questions that asked students to reflect on their ability to meet the learning objectives for the course, the midquarter surveys also included specific questions related to particular assignments, activities, or teaching technologies that were added to the course. During that quarter, this author added an additional question, eliciting a short response of 500 words maximum, targeted to student perceptions of using videos as a method of feedback. The questionnaire prompted students to speak about their experiences with Jing videos for the two particular assignments in which it was used and to specifically address whether it was beneficial (or not) to their learning. The final question was “Please tell me about your experience getting feedback through Jing screen capture videos on a response paper and your presentation. How did it improve your learning (or not)?”

The data set for this survey is limited as it was elicited from two sections of the same 200-level writing course at one institution, with a maximum potential of 40 respondents. Thirty-two students took the online survey, 22 responded to the short answer questions about Jing, and 3 responded about digital classroom tools other than Jing. An additional data set was elicited from one section of a similar 200-level course at a second institution, with a maximum potential of 16 respondents. All 11 students who participated in the survey provided short answer responses about the veedback. Six respondents also commented on the use of videos for instruction. Thus, the data used in this paper comes from 30 short answer responses, which were analyzed using content analysis. A number of key themes emerged and are discussed below. Most students who responded about Jing were extremely positive and found it beneficial to their learning. A few students, including those who found it beneficial, spoke of hearing and seeing through this digital tool as enhancing learning.

With only two out of 30 students stating a preference for comments “written down,” Jing comments received rave reviews as a form of feedback that aided student learning. Student preference for this type of feedback demonstrates how important it is that teachers deliver feedback employing multiple modes of delivery, combining the auditory, visual, and kinesthetic. Many spoke directly to the importance of auditory feedback as a key factor that contributed to their learning, and others claimed that the auditory in combination with the visual made the difference. Many students implied that the auditory explanations, coupled with the visual representation of their essay, gave them enough information to make meaningful revisions and apply feedback.

Students overwhelmingly included statements like “I like the Jing screen capture videos a lot” and “I think the Jing videos are very helpful.” Some students compared this video feedback form to traditional written comments, focusing on the negative side of the written comments rather than fully explaining the positives of the new form. One student, for example, wrote, “It felt as if I was talking with them – a much more friendly review rather than harsh critique.” In comments like these, student preferences were implied and, therefore, were analyzed for meaning.

Can You Hear Me Now? Can You See It Now?

Inherent in the student-teacher relationship is a power differential in which teachers have more power and the student is somehow deficient and in need of correction. Students expect correction from teachers, not dialogue about their work. Oftentimes, tone of voice is obscured in written comments, forcing students to imagine the teacher in their head. This imagined teacher often sounds harsh and punishing. For example, we might ask questions in our margin comments that are indeed questions. While we might be looking for further explanation or description, students might read these questions as rhetorical, not to be answered, flatly stating that they made some unconscionable mistake that should not appear in a future version or assignment. Anything written in the margins is the “red marker that scolds” (White 2006). Using one’s actual voice makes the tone of voice apparent. Audio feedback erases the red pen and replaces it with the sound of a human conveying “genuine” interest in the ideas presented. By giving veedback, we are able to use a conversational tone to talk about writing with students. We are able to share how their writing sounds and offer a variety of options.

Students overwhelmingly pointed to auditory feedback as beneficial to their learning. “Hearing” what the teacher was saying was the most important reason that screencasting was found to be such a successful feedback tool, with many students stating a preference for hearing someone’s voice going through their paper.

“Being able to hear your explanations was very helpful.”

“The fact that you are hearing somebody’s voice instead of reading words on a piece of paper.”

“Instead of just writing comments it helps hearing the feedback. It helps a lot with knowing what specific things to work on.”

The feedback may be perceived as friendly because students can hear tone of voice, recognizing that we as teachers are encouraging them and not criticizing them. We surmise that students may be gaining a way into the conversation because they hear us talking with them about writing, not preaching or using teacherly discourse.

In commenting on veedback, students pointed to more than just the audio component as valuable to learning; for some, it was the combination of hearing feedback while simultaneously seeing the site where ideas may be re-imagined. These comments pointed to the importance of learning through multiple modes of delivery simultaneously, specifically audio and visual.

“I liked being able to hear you and see my paper at the same time.”

“It’s great to be able to get the feedback while watching it being addressed on the essay itself.”

“It’s one thing to just read your instructors feedback but to be able to see it and understand what you are talking about really helps!”

“I can see and follow the instructor as she reads through my writing with the audio commentaries. It helps me to pin-point exactly what areas need to be corrected, what is hard to understand, which areas I did well on, and which areas could be improved.”

Some students showed metacognition about learning preferences, judging the tool as beneficial to them specifically because they believed themselves to be visual learners who benefited from “seeing” what was being discussed. Reproducing discourse about learning styles, these students took on the identity of self-aware learners.

“This way seemed to be very good for visual learners like myself.”

“I like the capture videos. I’m a visual person.”

Making Connections

A number of students described their confusion and frustration after receiving feedback through traditional methods, demonstrating the challenges of making connections between feedback and learning goals. Negative experiences with written feedback were contrasted with previous positive responses to veedback.

“I can’t tell you how many times I’ve gotten a paper back with underlines and marks that I can’t figure out the meaning of.”

“Sometimes when you receive a paper back half of it is decoding what the teacher said before even seeing what was commented on.”

Despite the fact that (written) feedback is intended to communicate important information to students, the end result is often quite the opposite; students feel frustrated, disempowered, and unable to take the necessary steps to apply the comments.

Students noted that veedback simulates the student-teacher conference. Although this form of feedback is only one side of the conference, from teacher to student, the conversational nature of the feedback is clear. Students picked up on this intention, calling veedback an “interactive” form of feedback that is available beyond office hours (24/7) in comparison to a one-to-one writing conference with the teacher.

“it’s like a student-teacher one-on-one conference whenever I can get the time. Very helpful.”

“I really love the Jing screen capture videos that you have given as feedback. It’s very interactive and has helped me a lot. Thank you.”

“It helped my learning by answering questions I had about my writing.”

“Video feedback helped me to better improve my work because it was almost like a classroom setting that allowed the teacher to fill in the interaction gaps without actually having an in-class setting. Not only that, the information could be replayed repetitively, allowing me to review them and reflect on them once I need help with my work.”

While veedback does not allow students to ask questions as they would in a face-to-face, phone, or video conference, hearing the voice of the teacher going through the paper does give students the sense that they can ask more questions because it establishes a personal connection and rapport, creating a sense of availability.

Veedback does more than allow teachers to create a more personal mentoring connection with students; it allows us to take advantage of digital technologies–often thought to dehumanize interaction–to personalize instruction beyond the classroom. Unlike with written comments that rely upon brief descriptions, many students noted that video comments improved their learning primarily because teachers provided deeper explanation.

“I think the Jing videos are excellent because they help me understand a lot better as to what I need to revise. They are a lot better and more helpful than regular comments.”

“It did help my learning, i was able to understand what i was doing wrong, and how to fix it.”

“I think I received more detailed feedback than I might have from written comments.”

“I like it better than normal comments because I can hear your thought process when you are making a comment so it is easier to understand what you’re trying to say.”

Students stated that explanations within video feedback made the thought process of the reader visible, allowing them to identify problems. Thus, veedback provided students with greater guidance about how to improve. One of the second author’s online students stated that veedback “felt like you were explaining it to me,” not just pointing out mistakes. In this way, veedback engages the student in ongoing learning rather than grade justification. Moreover, veedback encourages a response and encourages revision as a re-vision (seeing again), not as just changing to whatever the teacher wants.

It is important to clarify here that it is the audio part of veedback that allows students to hear tone, which many students find difficult to interpret in writing. Moreover, the medium of audio comments encourages students to think of feedback as a conversation. Inexperienced or less experienced (student) writers tend to conflate medium with tone, register, purpose, etc. That is, students often perceive written comments as directive, even when these comments are phrased as questions to consider or presented as guidance for revision. What veedback allows is for instructors to convey tone in both what they say and how they say it, thereby increasing the likelihood that students will understand our comments to be part of an ongoing negotiation between the meaning-making a reader enacts and the intended meaning writers attempt to create. While it is possible to transcribe spoken comments into written form, we posit that it is in hearing our voices that students are engaged in the conversation.

Accessing and Applying Veedback

The problem with traditional margin comments isn’t necessarily in the marks themselves, but in the disconnect between what teachers communicate and how students interpret that feedback. Teachers comment on assignments in hopes of reaching students by providing feedback about what worked well (if a student is lucky) and what went wrong. Feedback is frequently given merely as a form of assessment–justification for a grade. Regularly, feedback is provided after an assignment is completed and with the belief that a student will be able to transfer knowledge about what he did wrong and what he needs to do right the next time. Students are expected to fill in the gaps of their own knowledge. If students are lucky, feedback is given on early attempts (practice activities or essay drafts) to provide guidance, helping those who have lost their way to find their way back to the path.

Although most reviews of screencasting in the classroom have been positive, a recent study in the field of computer science found no significant effect of screencasts on learning (Lee, Pradhan, and Dalgarno 2008), and another uncovered pedagogical challenges of integrating screencasting (Palaigeorgiou and Despotakis 2010). These critical reviews help us to see that this technology is not a panacea. As with other learning technologies, many of us are quick to see the benefits of screencasting without fully assessing the problems it presents for learners. Many of the problems faced by the computer science students in the first study, such as access, speed, and uncertainty about how to use the tool, were also experienced by our writing students.

Although there are increasing expectations for instructors as well as students to use digital tools, sometimes there are additional obstacles based on students’ lack of digital literacy in new media that go beyond typical social networking and entertainment-based tools. The free version of Jing creates SWF files that require a Flash player to open and often require students to specify which program to open the file with. For the click-and-open generation, this has proved to be a challenge. Alternative software programs include options to save video files in the MP4 format, which can be more easily opened or played on other media devices (such as iPods). However, MP4 files are larger than SWF files, which presents other problems for downloading and/or uploading.

Technological difficulties were one of the primary obstacles to using video feedback. Students participating in the survey overwhelmingly liked veedback, but some complained of difficulties accessing and/or using the technology. Despite written instructions and campus resources providing students with help using academic technologies, two of the nineteen respondents said that they didn’t even know how to get into the videos. Because the survey was anonymous, the instructor remained clueless about which students had problems with access.

“Jing feedback videos and [Dropbox] comments still do not work on my end. I have talked with tech guys and they can’t figure it out. I can’t find out how I did and ways to improve my writing.”

“I like the videos but they were really hard to get them to work.”

“Sometimes it’s hard to open the videos.”

“I have no clue what Jing Feedback Video is and if I got a comment back it may have not opened because I tried to open some of the comments you left, but they would not open for me.”

“I think all the tech we use in class is great, but I have to teach myself how to use it :)”

The technological problems faced by these students resemble the difficulties faced by students unable to decipher the comments on the written page. That is, the technology acted as a barrier between our students and the conversation we tried to enact in written comments (both marginal and end comments) in the same way that written comments themselves are a barrier to the rich conversation that they are meant to convey. Until they asked for help or clarification, both groups of students (those with technological problems and those with written comments) remained in the dark, unable to access the feedback in any useful way.

After assessing video feedback in our early classes, we were surprised to learn that technological issues were not always the obstacle to learning. In fact, the obstacle was students’ difficulty understanding how to utilize the feedback in their revision process. Although only two of the respondents stated a preference for written feedback, the complaint brings to light important issues of how students access and apply feedback to make improvements to their work.

“personally i don’t like the jing videos. i’d rather have the comments written down so that I can quickly access the notes and not have to keep track of just where in the video a certain comment is.”

“Written feedback helps more because I get to see the description and review it again if I need to. It is more easier for me to see it written out than video”

The complaint about video feedback in this context can be compared to the specific problems described in the studies about computer science courses (Lee et al. 2008, Palaigeorgiou and Despotakis 2010). It is apparent from these two comments above that the students’ revision practices operate within print-based culture. That is, written feedback is a norm within education and students have background knowledge and a repertoire for working with this mode of feedback, which consequently creates a perception that working with written feedback is easier (even if it is not). Some students, therefore, feel frustrated by unfamiliar modes of feedback and resist new revision practices that require learning new strategies to engage with feedback. While it is not uncommon for new technologies to be resisted when they require some adaptation, students in other contexts show a propensity to develop strategies to overcome these challenges. Thus, continuing research needs to evaluate whether the potential difficulties of implementing veedback outweigh the benefits for learning.

Through this study, we found that students need instruction on strategies for interacting with written and digitally mediated forms of feedback before they can deeply engage in the revision process. Proposed solutions to improve student learning with video feedback include teaching them how to read and apply feedback, not unlike the ways in which we teach them how to interpret the comments we put on a paper. We suggest that teachers encourage students to take advantage of the video format by re-watching sections and pausing when necessary to “digest” comments, and that they teach students how to use feedback. We also recommend creating tutorials for students to demonstrate how to annotate a “hard copy” of the draft while watching the video, including highlighting and circling key points, time-stamping the draft to correspond with important places in the video, interpreting video feedback, and paraphrasing teacher comments in the margins. When students write their own comments, they do so in terms they understand and use writing to make sense of their own ideas through the act of rephrasing, reworking, and revising. Students already do translation work of digesting feedback during class, student-teacher conferences, and when they sit down to revise their work. What is valuable about student comments on their own work is that in that moment students are actively engaging in the process of revision (and learning).

Conclusion

Even when students understand what we are saying in our comments, they often don’t know how to reconceive the structure of their writing and change it (that is, they don’t understand how to reconfigure their ideas in their own voice). Many students continue to use templates and try to fill in the blanks, rather than see the model and then use the comments to make decisions about the types of revisions that can be made. In the service of learning, finding richer ways to teach students to engage with the work is of the utmost importance. It is our contention that students should be taught how to apply feedback to improve their work. Feedback that engages multiple learning styles while providing deeper explanation offers the possibility of increased student learning in a variety of higher education contexts.

Screencasting allows instructors to provide students with in-depth feedback and/or evaluation. With response papers and short written assignments, veedback allows the teacher to zoom in and highlight portions for discussion while scrolling through the document. With visually oriented work (e.g., art work, Web sites, and PowerPoint presentations), instructors can use the mouse to point at key elements while talking about the impact of the student’s choices. We suggest that instructors be mindful of time and create multiple videos if there is a need for extensive feedback. Conceivably, students can view each of the videos at different times, even on different days. It is debatable how long web-based videos should be (Agarwal 2011, Scott 2009, SkyworksMarketing 2010), but the need for concision and clarity remains vital for both the student and the instructor. We also recommend instructors inform students if only certain types of issues will be discussed in a particular feedback session.

Based on our pilot study, the majority of students perceived that they understood video comments in a more meaningful way than written comments. Veedback can be used to perform the “confused reader” instead of the “finger-wagging critical teacher.” Reading a margin comment that says “this is awkward” is different from hearing the sentence read aloud by a real reader. The audio portion of veedback allows for communication that is conversational. In other words, teachers can speak the student’s language with veedback in ways that are absent in written comments. When teaching multilingual speakers, teachers may find that reading sentences aloud models Standard English and possible alternative forms that are commonly spoken. Another way to consider using veedback is to give students a sense of a reader’s experience, presenting alternatives through visual imagery and analogies.

We can see that video feedback is effective in terms of engagement with the revision process because we have noticed that students responding to video feedback appeared to attend to big picture issues, making global revisions rather than merely edits to surface level errors. With video feedback, students hear what is confusing about a sentence (rather than just a phrase identifying the error type) and therefore are more willing to attempt revision. Video feedback provides an opportunity to elaborate on problems in writing assignments, which gives students more direct guidance about how to solve the communication problem.

Although students have responded positively to this multimodal teaching tool, additional studies comparing revisions that responded to written feedback and video feedback are needed to investigate specifically what it is about veedback that is so compelling. Student interaction with and application of veedback requires further investigation. Furthermore, assumptions that the current generation is more audio/visual-oriented, a claim that has yet to be proven, may create external pressures for teachers to incorporate digital media into their teaching before research proves its effectiveness. Debates about pedagogy and technology are intricately tied to these assumptions, which must be interrogated. The question remains whether veedback is in fact more effective in improving student performance, or if it is merely student perception because “it’s not your grandfather’s Oldsmobile.” That is, not only are we not using the scolding red pen, but we are also not using any of the traditional feedback methods with which students may have had prior negative experiences.

While redesigning e-learning pedagogy should yield improved student learning, the question of how to measure outcomes will likely remain a source of debate. Although studies have found subtle differences in the impact of technology on student learning, variation in study types and research methodologies continues to leave more questions than answers about the effectiveness of digitally mediated modes of instruction (Wallace 2004), with alternate modes of instructional delivery showing “no significant difference” in student outcomes (Russell 2010). Rather than assessing the effectiveness of e-learning tools like veedback as measured by improved grades, drawing upon the Seven Principles for Good Practice in Undergraduate Education to examine “time on task” (Chickering and Ehrmann 1996) would provide a better indicator of student engagement. We propose that further research utilizing digital tools like Google shared docs would provide an avenue to review writers’ revision histories. This would allow for an examination of the types of revisions students produce in response to different feedback modes during time on task and would yield information about how students are engaged in revision.

We argue that assessing video feedback in terms of performance or most effective mode of delivery would miss the most important point of what our research is attempting to propose. It seems most useful to answer this question: is it fruitful to deconstruct the idea of “engagement in the revision process” by discussing “engagement” and the “revision process” on their own terms? Although there are other ways to assess engagement in the revision process, we believe that students’ attitudes about engaging with feedback provide a wealth of data about affective engagement in the revision process, which gets us closer to understanding what makes our students motivated and, thus, invested in their own learning. While scholars continue to debate about effective ways to motivate students, we propose that using veedback can be an effective way to address the affective component in motivating students. That is, students who are invested in the interpersonal relationship with their instructor/reader are likely to engage in more extensive and/or intensive revision and, consequently, learn at deeper levels.

One of the shortcomings of our study–the fact that our data on student attitudes cannot be compared to writing samples because the survey tool elicited anonymous responses–highlights the challenges of assessing the impact of video feedback on student learning. In our case, the use of anonymous surveys to elicit honest responses conflicted with a desire to triangulate data, leaving us with more answers about students’ perceptions about their own engagement with feedback than proof of whether students who claimed that veedback improved their learning did in fact make improvements.

In courses that teach skills acquisition through a cumulative drafting process, a number of variables at play further trouble the ability to assess the effectiveness of this particular tool. In writing intensive courses, for example, we might question whether improvement in skills from an early draft to a later draft is a product of the feedback method specifically; when assessing improvement in a course that aims to improve skills over the course of a term, supplemental instruction during class (or through online tutorials) and the cumulative effect of skills and knowledge gained between drafts are likely to skew the results. In addition, improvement in the final product (in the form of a revised draft) can differ widely across the data sample, in terms of both classroom dynamics and individual student motivation, background knowledge, ability, and commitment to the course.

Future research that attempts to mitigate some of these variables and triangulate the data may provide a more satisfying answer about the effectiveness of veedback. For example, one option that would allow for a comparison between feedback forms is to use both forms to respond to the same type of assignment (e.g., summaries for two different articles) within one class. While this method may eliminate one variable by using the same students, other problems may arise, such as whether the form used later in the quarter may provide better results on account of cumulative learning or whether one of the assignments produced inferior results on account of the content. To compare across classes, researchers may want to use written feedback first in one class and video feedback first in another. While this may allow researchers to compare across classes and mitigate the problem presented by the order of the feedback form, other variables remain.

While it may be tempting to only ask whether video feedback is superior to traditional modes, we suggest that instructors also consider how this method supplements written feedback through an integration of technology in educational environments (Basu Conger 2005). Because student response to veedback was overwhelmingly positive (despite technological issues, students preferred this form of engagement to traditional written comments), we intend to continue to evaluate how veedback may improve student learning and enrich teaching. The following student comment reminds us that taking the time for innovation with digital teaching technologies is valuable to student learning and doesn’t fall on deaf ears: “It was a very unique feedback process that helped considerably. I know it’s time consuming but more of this on other assignments would be great!”

Bibliography

Agarwal, Amit. 2011. “What’s the Optimum Length of an Online Video.” Digital Inspiration, February 17. http://www.labnol.org/.

Anson, Chris M. 1989. Writing and Response: Theory, Practice, and Research. Urbana, IL: National Council of Teachers of English. ISBN 9780814158746.

———. 2011. “Giving Voice: Reflections on Oral Response to Student Writing.” Paper presented at the Conference on College Composition and Communication, Atlanta, GA.

Basu Conger, Sharmila. 2005. “If There Is No Significant Difference, Why Should We Care?” The Journal of Educators Online 2 (2). http://www.thejeo.com/Archives/Volume2Number2/CongerFinal.pdf.

CCCC. 2004. “CCCC Position Statement on Teaching, Learning, and Assessing Writing in Digital Environments.” http://www.ncte.org/cccc/resources/positions/digitalenvironments.

Chickering, Arthur W., and Stephen C. Ehrmann. 1996. “Implementing the Seven Principles: Technology as Lever.” AAHE Bulletin 49 (2): 3-6. ISSN 0162-7910.

Clements, Peter. 2006. Teachers’ Feedback in Context: A Longitudinal Study of L2 Writing Classrooms. PhD diss., University of Washington. https://digital.lib.washington.edu/researchworks/handle/1773/9322.

Community College Survey of Student Engagement (CCSSE). (n.d.). http://www.ccsse.org/.

Coupland, Nikolas, Howard Giles, and John M. Wiemann. 1991. “Miscommunication” and Problematic Talk. Newbury Park, CA: Sage Publications. ISBN 9780803940321.

Davis, Anne, and Ewa McGrail. 2009. “‘Proof-revising’ with Podcasting: Keeping Readers in Mind as Students Listen To and Rethink Their Writing.” Reading Teacher 62 (6): 522-529. ISSN 0034-0561.

Duvall, Annette, Ann Brooks, and Linda Foster-Turpen. 2003. “Facilitating Learning Through the Development of Online Communities.” Presented at the Teaching in the Community Colleges Online Conference.

Ertmer, Peggy A., Jennifer C. Richardson, Brian Belland, Denise Camin, Patrick Connolly, Glen Coulthard, Kimfong Lei, and Christopher Mong. 2007. “Using Peer Feedback to Enhance the Quality of Student Online Postings: An Exploratory Study.” Journal of Computer-Mediated Communication 12 (2): 412-433. http://jcmc.indiana.edu/vol12/issue2/ertmer.html.

Evans, Darrell J. R. 2011. “Using Embryology Screencasts: A Useful Addition to the Student Learning Experience?” Anatomical Sciences Education 4 (2): 57-63. ISSN 1935-9772.

Falconer, John L., Janet deGrazia, J. Will Medlin, and Michael P. Holmberg. 2009. “Using Screencasts in ChE Courses.” Chemical Engineering Education 43 (4): 302-305. ISSN 0009-2479.

Furman, Rich, Carol L. Langer, and Debra K. Anderson. 2006. “The Poet/Practitioner: A New Paradigm for the Profession.” Journal of Sociology and Social Welfare 33 (3): 29-50. ISSN 0191-5096.

Georgakopoulou, Alexandra, and Dionysis Goutsos. 2004. Discourse Analysis: An Introduction. Edinburgh: Edinburgh University Press. ISBN 9780748620456.

Holmes, Bryn, and John Gardner. 2006. E-Learning: Concepts and Practice. Sage Publications Ltd. ISBN 9781412911108.

Lee, Mark J. W., Sunam Pradhan, and Barney Dalgarno. 2008. “The Effectiveness of Screencasts and Cognitive Tools as Scaffolding for Novice Object-Oriented Programmers.” Journal of Information Technology Education 7: 61-80. ISSN 1547-9714.

Liou, H.-C., and Z.-Y. Peng. 2009. “Training Effects on Computer-Mediated Peer Review.” System 37 (3): 514-525. doi:10.1016/j.system.2009.01.005.

NSSE Home. (n.d.). http://nsse.iub.edu/.

Notar, C. E., J. D. Wilson, and K. G. Ross. 2002. “Distant Learning for the Development of Higher-Level Cognitive Skills.” Education 122: 642-650. ISSN 0013-1172.

Nurmukhamedov, Ulugbek, and Soo Hyon Kim. 2010. “‘Would You Perhaps Consider …’: Hedged Comments in ESL Writing.” ELT Journal: English Language Teachers Journal 64 (3): 272-282. doi:10.1093/elt/ccp063.

Palaigeorgiou, George, and Theofanis Despotakis. 2010. “Known and Unknown Weaknesses in Software Animated Demonstrations (Screencasts): A Study in Self-Paced Learning Settings.” Journal of Information Technology Education 9: 81-98. ISSN 1547-9714.

Russell, Thomas L. 2010. “The No Significant Difference Phenomenon.” NSD: No Significant Difference. http://www.nosignificantdifference.org/.

Scott, Jeremy. 2009. “Online Video Continues Ridiculous Trajectory.” ReelSEO: The Online Video Business Guide. http://www.reelseo.com/online-video-continues-ridiculous-trajectory/.

SkyworksMarketing. 2010. “What’s the best length for an internet video?” SkyworksMarketing.com, February 2. http://skyworksmarketing.com/right-video-length/.

Thurlow, Crispin, Laura B. Lengel, and Alice Tomic. 2004. Computer Mediated Communication: Social Interaction and the Internet. Thousand Oaks, CA: Sage. ISBN 9780761949534.

Vondracek, Mark. 2011. “Screencasts for Physics Students.” Physics Teacher 49 (2): 84-85. ISSN 0031-921X.

Wallace, Patricia M. 2004. The Internet in the Workplace: How New Technology Is Transforming Work. New York: Cambridge University Press. ISBN 9780521809313.

White, Edward M. 2006. Assigning, Responding, Evaluating: A Writing Teacher’s Guide. Boston: Bedford/St. Martin’s. ISBN 9780312439309.

Yee, Kevin, and Jace Hargis. 2010. “Screencasts.” Turkish Online Journal of Distance Education 11 (1): 9-12. ISSN 1302-6488.

About the Authors

Riki Thompson is an Assistant Professor of Rhetoric and Composition at the University of Washington Tacoma. Her research takes an interdisciplinary approach to explore the intersections of the self, stories, sociality, and self-improvement. Her scholarship on teaching and learning draws upon discourse, narrative, new media, and composition studies to reflect upon, assess, and improve methods for using digital technology in the classroom.

Meredith J. Lee is currently a Lecturer at Leeward Community College in Pearl City, HI. Her pedagogy and scholarship draws upon discourse, rhetorical genre studies, composition studies, sociolinguistics, and developmental education. Dr. Lee’s work also reflects her commitment to open access education.

Notes